How to Pick Winning Strategies? Think Like a Scientist!

This article examines two data-focused checkpoints to help identify and select winning business strategies.

How do you select a business strategy?

And more importantly, how do you know if it is founded on facts?

In the scientific community, researchers follow the “scientific method” to derive insights from a hypothesis, using experimental data to remove bias and intuition (Figure 1, top). Researchers are trained to test their hypothesis by performing multiple experiments that challenge and verify it, with the understanding that the results may only partially validate or completely invalidate the hypothesis. A new or augmented hypothesis may then be born and tested. Importantly, their hypothesis is founded on prior work by other scientists who applied the scientific method to their own research, building a strong foundation for future work.

The business world can learn to tailor and adopt this methodology to identify a business strategy, avoiding costly mistakes or missed opportunities. In strategic planning, the analysts and the decision makers leverage quantitative and qualitative data to model the future, identify metrics for validation, adjust the model, implement, and set short- and long-term metrics & KPIs to monitor business performance (Figure 1, bottom).

This process requires a few things to go well; otherwise, the strategy is built on shaky ground. It holds up only:

if the model is founded on accurate data,

if it is adjusted for bias, and

if it is challenged against better alternatives.

Depending on the business, a strategy may be evaluated using internal and external data or simply selected on the basis of the leadership's previous experience. The results may indicate success with adjustments and pivots along the way, making strategic planning and validation a bit more open-ended and the entire process a bit more optional. In business, the C-suite members or their favorite consultancy firms have an established record of setting winning strategies, placing them at the top of the decision-making process. Frequently, data is back-fitted to support their hypothesis instead of challenging it, propelling their teams to rush to execute so as not to miss the competitive advantage or leave revenue dollars on the table.

A Problem, Squared

Recently, I ran across a situation that made me think about the problems with the standard flow of identifying and selecting strategies in business.

In graduate school, just like everyone else in my cohort, I would read and re-read and read some more any and all publications and information about the landscape of my subject of research. This was an absolute necessity to ensure my hypothesis was founded on solid ground and to leverage the prior work to drive the field forward. As my fellow researchers would agree, typing keywords into PubMed can be a bit of a painful process, so everyone has a bit of a ritual to get through it. In my case, it required a large coffee (recommended by my advisor) and a bagel, sitting in my comfy reading chair strategically placed in the sunny corner of the lab during the cold winters of Michigan. Many good memories, but these days I have found a better way!

The recent release of OpenAI’s ChatGPT has garnered a lot of interest by launching machine learning into the mainstream, with great potential for improving productivity and quality of work. Since I started playing around with my beta account, I have been curious whether the AI could have saved my life back in the day by quickly summarizing what I needed to know and giving me the references to go back and read each article in more depth. And bingo! It worked! For the most part…

I asked ChatGPT to use patent claims from a collaborative work in graduate school to identify new findings on the use of biosensors, specifically the Quartz Crystal Microbalance (QCM), in monitoring cell-cell interactions. ChatGPT elegantly summarized my work in a single paragraph and highlighted that new research had been done on this topic since our team’s published work in 2016. Of course, I was very excited to see what would come up, so I asked ChatGPT to provide me with the link to the article, which it did. What happened next prompted a series of cross-validations: I couldn’t find the article. I thought, very strange, maybe I’m becoming a bit rusty at using PubMed; after all, it’s been a few years since I could call myself a “scientist”. After several searches of the author, the journal, publication sources, and other references, the only rational conclusion was that ChatGPT had made an error. The AI can generally provide highly accurate information on practically any topic; however, at times it may generate inaccurate or misleading information. See my interaction with ChatGPT at the end of this post.

In summary, the lack of complete information and errors introduced by humans during supervised learning may lead ChatGPT to provide “the likeliest answer” rather than “the correct answer”.

Essentially, humans and machines are trained to prioritize an answer over no answer, at the risk of giving an incorrect one… A compounding problem if that answer is used as the foundation to set new strategies.

Solution: A Two Checkpoint Workflow

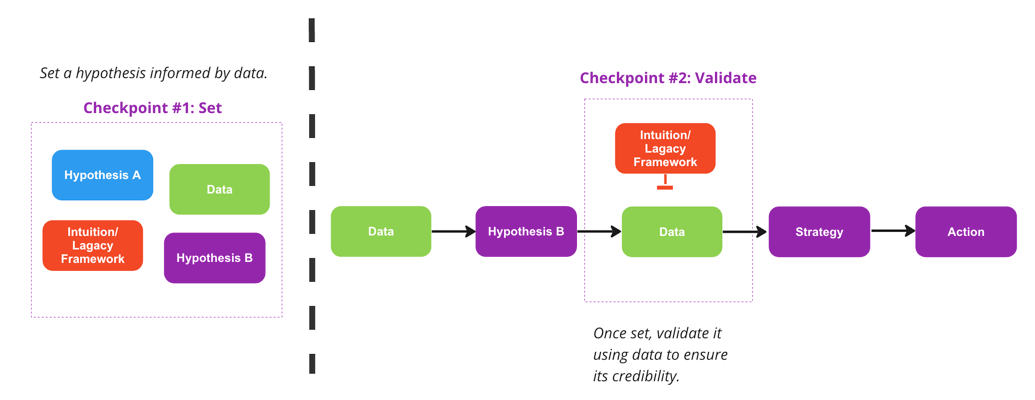

There are two gateways for introducing errors into the strategy workflow highlighted earlier (Figure 1, bottom):

Setting the strategy based on past experiences, which often reflect outdated economic and market conditions.

Using incomplete or incorrect data to analyze the strategy or outcomes.

Essentially, potential error or bias in these steps of strategic planning can derail the entire exercise by selecting a losing strategy or using “bad data” to build the model.

The solution to these potential gaps is a two-checkpoint workflow placed at these gateways, which helps prevent missteps during planning and during post-implementation monitoring.

Checkpoint #1: Identify the hypothesis (pre-strategy). This way, the pre-strategy is optimized for the highest return on investment and competitive advantage at the lowest risk. This step surfaces the best potential strategy, which still needs to be tested and validated as the winning strategy.

Checkpoint #2: Use data from a variety of sources to test and validate the pre-strategy, yielding the winning strategy for implementation. This step validates or challenges the pre-strategy, acting as a second barrier for vetting the strategy. Data is collected continuously post-implementation (action) to adjust the model as necessary.
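For readers who like to see a process written down, here is a minimal Python sketch of the two checkpoints. It is an illustration under assumptions, not a prescribed implementation: the `Strategy` fields, the return-per-unit-of-risk ranking, the `min_roi` threshold, the requirement of at least two data sources, and all of the numbers are hypothetical choices made up for this example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Strategy:
    name: str
    expected_roi: float  # hypothetical estimate, e.g. 0.25 means a 25% return
    risk: float          # hypothetical risk score in (0, 1]; higher is riskier


def rank_pre_strategies(candidates):
    """Checkpoint #1: order candidates by expected return per unit of risk."""
    return sorted(candidates, key=lambda s: s.expected_roi / s.risk, reverse=True)


def passes_validation(strategy, evidence, min_roi, min_sources=2):
    """Checkpoint #2: test a pre-strategy against observed data.

    `evidence` maps a strategy name to {source name: observed ROI}.
    The pre-strategy passes only if enough independent sources exist
    and their average observed ROI reaches `min_roi`.
    """
    observations = evidence.get(strategy.name, {})
    if len(observations) < min_sources:
        return False  # not enough data to validate or challenge the hypothesis
    return mean(observations.values()) >= min_roi


def select_winning_strategy(candidates, evidence, min_roi):
    """Return the best-ranked candidate that survives validation, else None."""
    for strategy in rank_pre_strategies(candidates):
        if passes_validation(strategy, evidence, min_roi):
            return strategy
    return None


# Made-up example data
candidates = [
    Strategy("expand", expected_roi=0.30, risk=0.6),
    Strategy("optimize", expected_roi=0.15, risk=0.2),
    Strategy("acquire", expected_roi=0.50, risk=0.9),
]
evidence = {
    "optimize": {"internal": 0.02, "market": 0.04},
    "acquire": {"internal": 0.40},
    "expand": {"internal": 0.28, "market": 0.22, "survey": 0.25},
}
winner = select_winning_strategy(candidates, evidence, min_roi=0.10)
print(winner.name if winner else "no validated strategy")
```

In this made-up example, the candidate that looks best on paper at Checkpoint #1 is not the one that survives Checkpoint #2, because the observed data do not support it. Returning `None` when nothing passes is a deliberate choice in the sketch: no answer is preferable to a back-fitted one.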

These two checkpoints help reduce error and bias but require staying open-minded to allow the winning strategy to surface through the data, similar to how a scientist conducts every experiment with the understanding that he or she can be wrong!

I hope this article highlights the importance of using data to identify and test winning strategies. This approach allows analysts and decision makers to identify and select the winning strategy, not just the obvious one or the one they wish were right!

As always, I would love to hear from you. Feel free to contact me at mida@stema-cg.com, via LinkedIn, or comment below. If you enjoyed this article, consider subscribing to receive The Lab as a newsletter.

Happy New Year!

-Mida

Note: OpenAI has added a disclaimer/warning to highlight this point.

“While we have safeguards in place, the system may occasionally generate incorrect or misleading information and produce offensive or biased content. It is not intended to give advice.”

Here is my conversation with ChatGPT: